The 19th annual San Francisco Electronic Music Festival concluded yesterday, and we at CatSynth were on hand for the final concert. There were three sets, each showcasing different currents within electronic music, but they all shared a minimalist approach to their musical expression and presentation.

The evening opened with a set by Andy Puls, a composer, performer and designer of audio/visual instruments based out of Richmond, California. We had seen one of his latest inventions, the Melody Oracle, at Outsound’s Touch the Gear (you can see him demonstrating the instrument in our video from the event). For this concert, he brought the Melody Oracle into full force with additional sound and visuals that filled the stage with ever-changing light and sound.

The performance started off very sparse and minimal, with simple tones corresponding to lights. Combining tones resulted in combining lights and the creation of colors from the original RGB sources. As the music grew increasingly complex, the light alternated between the solid colors and moving patterns.

I liked how the sound and light truly seemed to go together, like separate lines in a single musical phrase. It was a glimpse of what music would be if it were done with light rather than sound.

OMMO, the duo of Julie Moon and Adria Otte, brought an entirely different sound and presence to the stage.

The performance explored the “complexities and histories of the Korean diaspora and their places within it.” And indeed, the piece moved freely back and forth between traditional and abstract sounds, and between Korean and English words. Moon’s voice was powerful and evocative, and quite versatile in range as she moved through these different ideas. The processing on her voice, including delays and more complex effects, was crisp and sounded like an extension of her presence. Otte performed on laptop and analog electronics, delivering a solid foundation and complex interplay. A truly dynamic and captivating performance.

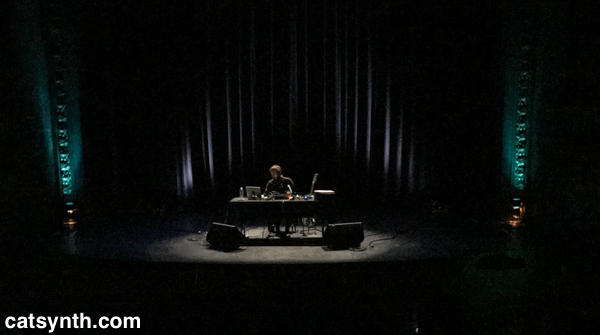

The final set featured a solo performance by Paris-based Kassel Jaeger, who recently became director of the prestigious Groupe de Recherches Musicales (GRM). Sitting behind a table on a darkened stage, with a laptop, guitar and additional electronics, he brought forth an eerie soundscape.

The music featured drone sounds, with bits of recognizable recorded material, as well as chords and sharp accents. The musique concrète influence was abundant but also subtle at times, as the source material was often submerged in complex pads and clouds over which Jaeger performed improvisations.

It is sometimes difficult to describe these performances in words, though we at CatSynth try our best to do so. Fortunately, our friends at SFEMF shared some clips of each set in this Instagram post.

Much was also made of the fact that this was the 19th year of the festival. That is quite an achievement! And we look forward to what they bring forth for the 20th next year…

The exhibition was titled “Her Being and Nothingness” and featured a series of self portraits. In each image, the focus is on “the body.” The face is either absent or obscured, and the poses and attire vary in each. We of course know they are self portraits (itself an interesting concept in photography), but without the usual cues for identity. In this case, we draw the conclusion directly from the bodies.

I did take some time out of today’s preparations to return to the conference for the
